The last day of January 2019 was sunny, yet bitterly cold in Romford, east London. Shoppers scurrying from retailer to retailer wrapped themselves in winter coats, scarves and hats. The temperature never rose above three degrees Celsius.

For police officers positioned next to an inconspicuous blue van, just metres from Romford’s Overground station, one man stood out among the thin winter crowds. The man, wearing a beige jacket and blue cap, had pulled his jacket over his face as he moved in the direction of the police officers.

The reason for his camouflage? To avoid the facial recognition technology fitted to the blue van, which was surveying the street around it. Disgruntled Metropolitan Police officers pulled the unnamed man aside to question him. “If I want to cover me face, I’ll cover me face,” he said, before police took his picture and issued him with a £90 fine for disorderly behaviour.

The whole incident was captured on camera by BBC journalists producing a documentary and campaigners from civil liberties group Big Brother Watch. Police on the scene claimed the man was stopped because he was “clearly masking” his face from the cameras. “It gives us grounds to stop and verify,” one officer said.

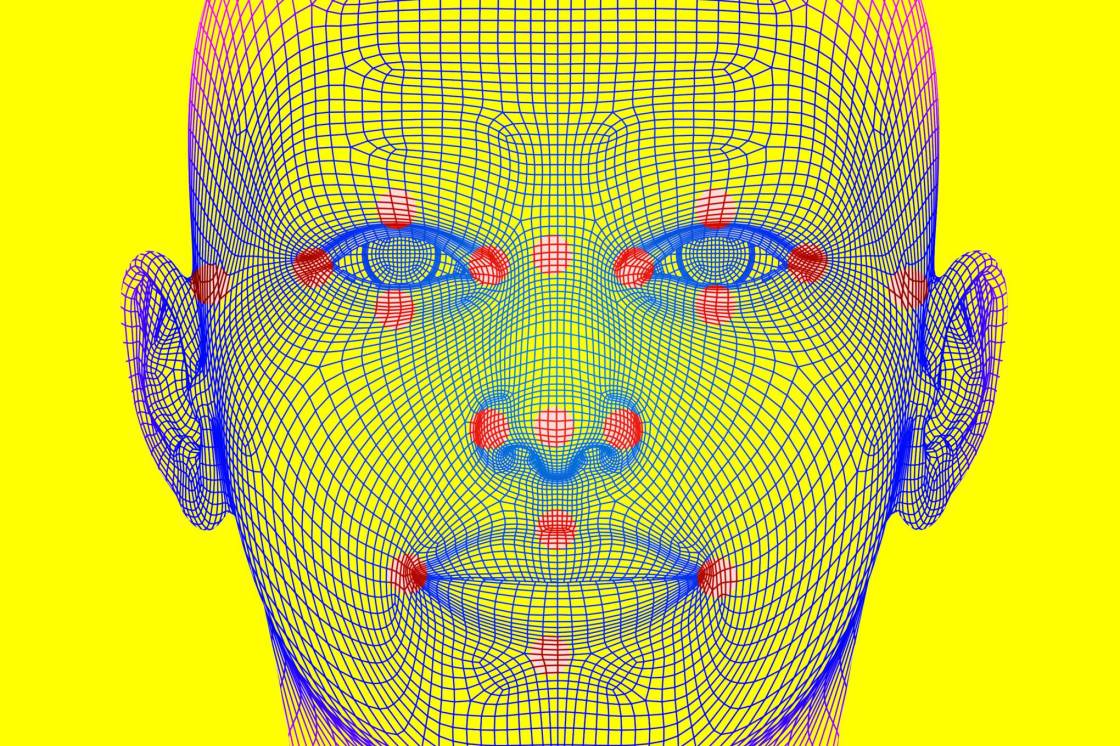

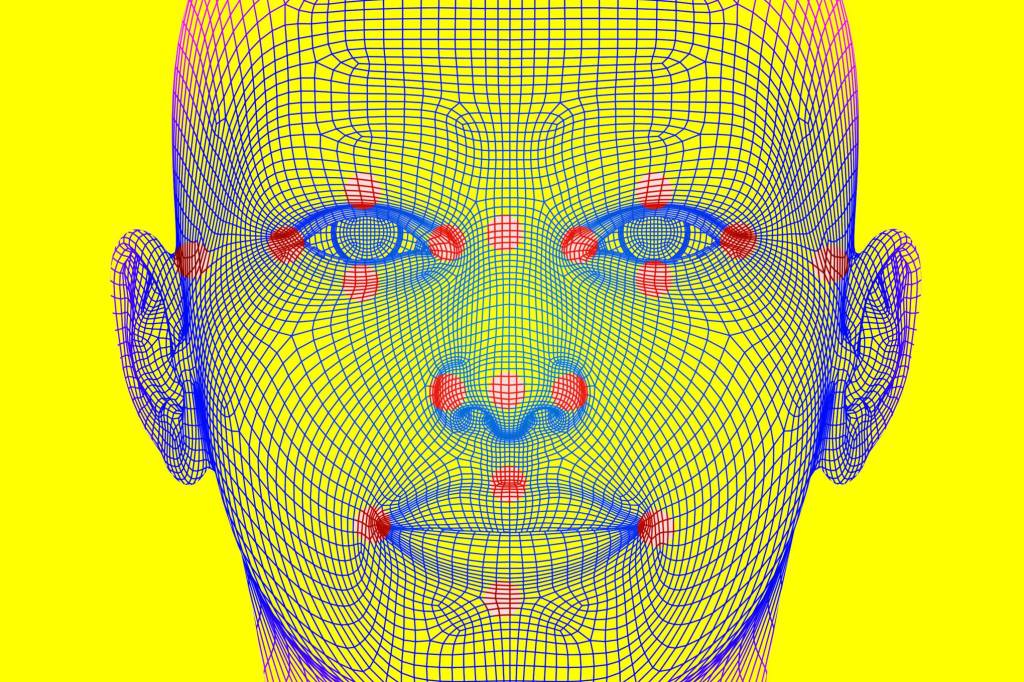

The automatic facial recognition system used by police relies on cameras to capture images of people’s faces. When a face is detected, a biometric map of the points on the person’s face is created and checked against an existing database of images. Police can set the level of similarity required between a newly created biometric map and those already in their data. Once a face has been flagged as a match, officers can manually review the finding. In the UK, 20 million photos of faces are held in police databases.
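The matching step described above can be sketched in a few lines of Python. This is an illustration only, not the police system's actual algorithm: it assumes a biometric map can be represented as a short list of numbers and compared using cosine similarity, and the names `cosine_similarity`, `find_candidates`, `watchlist` and the example values are all hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine similarity of two equal-length numeric vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def find_candidates(probe, watchlist, threshold):
    """Return (name, score) pairs scoring at or above the threshold, best first.

    Every returned pair is only a candidate: in the process described in the
    article, an officer still reviews each flagged match manually.
    """
    matches = []
    for name, template in watchlist.items():
        score = cosine_similarity(probe, template)
        if score >= threshold:
            matches.append((name, score))
    return sorted(matches, key=lambda m: m[1], reverse=True)


# Hypothetical three-number "biometric maps"; real systems use far longer vectors.
watchlist = {
    "person_a": [0.9, 0.1, 0.3],
    "person_b": [0.1, 0.8, 0.5],
}
probe = [0.88, 0.15, 0.28]  # map built from a newly captured face

strict = find_candidates(probe, watchlist, threshold=0.99)
loose = find_candidates(probe, watchlist, threshold=0.35)

print([name for name, _ in strict])
print([name for name, _ in loose])
```

With the strict threshold only the close match is flagged; lowering the threshold also flags a face that is not especially similar. That trade-off is why the configurable similarity level matters: a lower setting catches more true matches but sends more false ones to officers for review.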

Although only three people were arrested during the Romford trial, the encounter underscores the rapid growth of facial recognition systems in public places and their conflict with human rights laws. Police forces around the world – most notoriously in China – are eager to use the technology to help pick faces out of crowds under the guise of greater efficiency and the aim of keeping people safe. But at the same time, lawmakers are scrambling to keep up with the capabilities of an era-defining surveillance system.

The divisive nature of facial recognition led to San Francisco, in May 2019, becoming the first US city to ban its use. (City officials voted 8-1 in favour of the block.) Tech giants, too, have started to take a stance against the technology, often at the risk of endangering their own bottom lines. Amazon has called for federal regulations on its use and Microsoft has argued it has a “potential for abuse”.

But in the UK, the east London trial is far from unique. In recent years the Metropolitan Police has tested its use across the capital, a force in Wales has scanned the faces of people attending football matches and in Leicester police have used it at a music festival. Facial recognition’s use has reached a critical acceleration point: if left ungoverned, widespread rollouts could see cameras routinely used across cities.

The growing ubiquity of facial recognition has an unlikely enemy: Ed Bridges. The university office worker, who is a dad of two and a dedicated football fan, has lived in Cardiff for 20 years. There’s very little that’s remarkable about Bridges. But this hasn’t stopped him from coming under the lens of the facial recognition cameras of South Wales Police.

Twice in the last two years Bridges has been photographed by the cameras attached to police vans, as the force has conducted the UK’s largest trial of facial recognition technology. The system, which is underpinned by tech called NeoFace from Japanese company NEC Corporation, has been used on dozens of occasions – scanning thousands, or tens of thousands, of faces each time.

Bridges says the first time he saw the cameras – while Christmas shopping on Queen Street, Cardiff’s high street, in December 2017 – he was confused by their presence. But it was the second time, as he peacefully protested at an arms fair, that they really bothered him. “I felt that it was unacceptable,” Bridges says of the protest at Cardiff’s Motorpoint Arena on March 27, 2018. “The first time you knew it was there was when you were close enough to it to already have your image captured, which I found discomforting.”

In May, Bridges, after being approached by human rights group Liberty, started legal proceedings against South Wales Police, demanding that the technology be banned. The case may be a defining moment in the UK’s use of facial recognition. It offers the first chance for judges to decide whether the systems currently in use break privacy and equality laws, as well as to provide guidance on what the next steps should be. (Any decision is still months away; court appeals may then follow.) Whatever conclusion is reached, the case will undoubtedly have an impact on a second legal challenge, launched by Big Brother Watch, against the Met Police’s use of the same technology.

Megan Goulding, Bridges’ solicitor and the head of Liberty’s tech and human rights litigation, says there are three key areas where police use of facial recognition breaks the law: the European Convention on Human Rights (ECHR), UK privacy laws and national equality laws.

“This is inherently disproportionate, this technology, because it’s intended to scan thousands, if not hundreds of thousands of people and take their biometric data without their knowledge or consent,” Goulding explains. Liberty estimates that during South Wales Police’s trials more than 500,000 people have had their faces captured – five faces can be recognised in a single camera image, with 10 frames being recorded per second. The technology doesn’t store people’s faces if there isn’t a match against its programmed databases; if there is a match, the image is stored for 24 hours.
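To give a sense of scale, the two figures quoted above (five faces per image, 10 frames per second) imply a ceiling on how many face captures a single camera could process. The short Python sketch below works that through. It is a rough upper bound under the assumption that every frame contains the maximum number of faces; the function name `max_face_captures` is hypothetical, and the result counts captures, not unique people, since the same person appears in many consecutive frames.

```python
FACES_PER_FRAME = 5     # faces recognisable in one camera image (from the article)
FRAMES_PER_SECOND = 10  # frames recorded per second (from the article)


def max_face_captures(seconds):
    """Upper bound on face captures by one camera over the given duration."""
    return FACES_PER_FRAME * FRAMES_PER_SECOND * seconds


per_second = max_face_captures(1)
per_hour = max_face_captures(60 * 60)

print(per_second)  # 50
print(per_hour)    # 180000
```

Even if real-world figures fall well below this ceiling, a deployment lasting a few hours at a busy event can plausibly process tens of thousands of faces, which is consistent with the scale Liberty describes.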

Liberty argues the technology’s use breaks Article 8 of the ECHR, the right to privacy, as it isn’t necessary or proportionate for the faces of thousands of people to be captured. Facial maps created by the system are likened to DNA or fingerprints because they collect biometric information, unlike CCTV, which only captures images of a person. As with fingerprints and DNA, it’s not possible for a person to change their facial features.

While facial images aren’t retained by police, Goulding told judges over a three-day hearing at Cardiff Civil Justice and Family Centre in May that the intrusion into people’s privacy happens as soon as they have their face processed by the cameras. A similar argument has been made by the UK’s data protection regulator, the Information Commissioner’s Office (ICO). In a legal intervention in Bridges’ and Liberty’s case, the ICO said the conversion of facial images to raw information “involves large scale and relatively indiscriminate processing of personal data”. This could amount to a “serious interference” with privacy rights, the ICO added.

The case against South Wales Police doesn’t just focus on European-level rights; it is also trying to convince judges that national UK data protection laws have been violated. It’s claimed both the Data Protection Act 1998, which was in place when Bridges was Christmas shopping, and the Data Protection Act 2018, which came into force as GDPR was introduced, have been breached by the data collection.

South Wales Police hasn’t shied away from the fact it has been using facial recognition technology. Scott Lloyd, the force’s head of the technology, has regularly tweeted about the successes the system has had. On June 22, 2018, he said facial recognition had led to the arrest of someone who was wanted for assault. Two days later it was observing thousands of music fans attending an Ed Sheeran concert. (It’s also been used at other major events, such as the Champions League Final in 2017.)

South Wales Police says that more than 450 arrests have been made using its facial recognition systems. However, only 50 of these were made with the real-time AFR Locate system. It says images captured by the cameras can be compared against a custody database of 500,000 images. The police force has also published a website that explains how its technology works. During the time the system has been used publicly, the force claims, the algorithms have been updated to become more accurate.

After the close of Bridges’ hearing, South Wales Police’s deputy chief constable Richard Lewis issued a brief statement saying analysis of the systems used was welcome and a “significant” number of arrests had been made during its trials. “The force has always been very cognisant of concerns surrounding privacy and understands that we, as the police, must be accountable and subject to the highest levels of scrutiny to ensure that we work within the law,” Lewis said. During the case Jeremy Johnson QC, representing the police, told judges that the use of facial recognition didn’t breach people’s privacy rights and was similar to the use of CCTV.

Liberty disagrees. The group argues that police use of facial recognition has been indiscriminate, saying that the tech has primarily been used at large events or in areas with high footfall and not to help locate specific suspects. One of Goulding’s central arguments is that there are no published criteria for who should be included in the system’s watchlist of individuals. Effectively, the system captures thousands of faces in the hope of identifying some people in its dragnet. Liberty’s legal documents suggest there’s nothing to stop police including images from social media in their systems in the future, although they are not currently used. The confusion around who is included on watchlists was highlighted during a 2017 Met Police facial recognition trial at a Remembrance Sunday service. On the police’s list of faces its machines should look out for were 50 people known for “obsessive behaviour” towards public figures.

For Goulding, Bridges, and Liberty, there are questions about the effectiveness of facial recognition when used by police. There is currently little evidence showing that investment in the technology is worth the time and resources spent. A review of South Wales Police’s systems, linked to on its own website, says there haven’t been any conclusive findings that the systems have reduced repeat offending or led to greater community cohesion. “The ‘headline finding’ of this research is that AFR technologies can certainly assist police to identify suspects and persons of interests, to both solve past crimes and prevent future harms,” the report says, before adding the technology is not a “silver bullet” to help police solve crimes.

But the headline findings obscure the more critical elements of the report. The document, produced by academics from Cardiff University, stressed the unexplainable nature of the algorithm, a lack of law governing the technology’s use and ethical concerns. Facial recognition technologies have a well-documented history of handling images of non-white and non-male faces poorly. More than once, Amazon’s technology – which police forces in the US have been interested in buying – has been found to exhibit gender and racial bias. In one test the system disproportionately confused members of the US Congressional Black Caucus with images on a mugshot database.

In Wales, Liberty has argued that the police breached the Equality Act 2010 because the force didn’t fully consider the potential impacts before using the technology. The issue is one Bridges has become more aware of as the proceedings have progressed. “As a white male it is very good at scanning my face, which I suppose I should take some comfort from because I am less likely to be improperly stopped because of a false match,” he says.

“If I was a woman or had a non-white face I think I would feel very uncomfortable about the fact that this technology that police are putting so much faith in is particularly poor at capturing my image properly.”

The growth of facial recognition technologies shouldn’t come as a surprise – the systems aren’t new in any way. In 1973, Japanese academic Takeo Kanade published a 100-page thesis that described one of the earliest efforts to use computers to identify the faces of individual humans. Kanade explains that until then the emerging field, which would later be known as computer vision, was focussed on devices recognising characters in written text.

In Kanade’s test his machines were put to work highlighting the facial features – nose, mouth, eyes and so on – of people in 800 photographs. A subsequent test saw it attempt to recognise individuals based on two different photographs of them. “In the identification test, 15 out of 20 people were correctly identified,” the Kyoto University academic’s paper says. The tests were completed using static, high-quality images, and weren’t representative of the real-world difficulties that the automated facial recognition systems being used by police around the world face.

Over the last half-decade, rapid advances in machine learning and computing power have allowed facial recognition to reach the stage where it can be used on crowds of thousands of people. (Taylor Swift’s security team used the technology in the privately owned Rose Bowl at the end of 2018.)

But lawmakers have failed to keep up with the pace of technological advancement. No UK laws directly state how automated facial recognition should be used, although there are clear guidelines on how other biometric data, including fingerprints and DNA, should be handled. The lack of oversight of facial systems has left police free to conduct trials of the technology, but it has also contributed to Bridges’ case being brought.

At the start of June this year the UK’s Law Society, which analyses legislation and acts as a trade body for solicitors, issued a report saying the ways some facial recognition systems are being used “lack a clear and explicit lawful basis”. The Law Society is not alone in its criticism. Deputy information commissioner Steve Wood has said the ICO is conducting an “urgent” inquiry into the use of live facial recognition tech.

It isn’t just automated facial recognition systems that have flown under the radar. Uncertainty also exists around the millions of facial images that sit in UK police databases. In 2012, the High Court ruled that police uploading millions of custody images, including those of people not charged with crimes, was unlawful. Police were told the images could only be kept for another six years.

Discussions about how images should be removed are still ongoing and a Home Office analysis in 2017, which took five years to produce, said 16 million of these images had been used within facial recognition systems. These systems are different to the live recognition that’s being used on the streets but have drawn criticism for how much data has been kept. “It is unjustifiable to treat facial recognition data differently to DNA or fingerprint data,” parliament’s science and technology committee chair Norman Lamb MP said after conducting a review last year.

The topic has provoked calls from the UK’s biometrics commissioner for laws to be introduced that govern how facial images are used. “It does not propose legislation to provide rules for the use and oversight of new biometrics, including facial images,” commissioner Paul Wiles said of the government’s proposed biometrics strategy, which runs to just 27 pages.

While any government regulation on facial recognition technology is slow to arrive, the capabilities of the systems are rapidly improving and adoption is growing. Bridges’ court case comes as the broader idea of facial recognition technology is becoming normalised with its inclusion as a security feature within iPhones and other devices. Goulding worries that if no action is taken against real-time facial recognition in public spaces then it could impact society as a whole. She says: “It’s going to make people self-censor where they go, or who they go with. And that will fundamentally alter how we interact with public spaces and with the state.”

All Rights Reserved for Matt Burgess