How much do we really know about the spaces we inhabit, about the systems chugging along in the background, keeping us moving through our everyday lives?

There’s actually quite a lot, if you know where to look.

With the rise of Internet of Things (IoT) devices, our spaces can now communicate with us. We can tap into this flow of spatial data to provide useful experiences, connecting people with places like never before. IoT data is all around us, but for the most part we’re in the dark: what we need to know is buried underground, behind walls and under floors, where we can’t access it.

As our systems evolve rapidly and become more entangled, we need to be able to keep them humming smoothly. We need an easy, accessible way to visualise their data, so we can use it to our advantage.

To test the viability of an IoT-connected future, Telstra Labs constructed a purpose-built prototype device: the “IoT Water Pump”.

![image](https://miro.medium.com/max/3840/1*RHk1WgNSR9rfrp68Hn7_aQ.jpeg)

Fitted out with multiple IoT components, the device gave us a testbed for exploring the most intuitive ways to extract and visualise IoT data. For Telstra Labs, the goal was to find an Extended Reality (XR) experience that could contextualise the pump, and the data collected from its sensors, in any environment.

For us here at PHORIA, it was a chance to do what we love — to help create deeper connections between people, their devices and their spaces. The central question we had to answer was this: how do we make a data-driven experience human? How do we connect people with the devices working around them, in this case a water pump?

Our collaboration set out to answer this question with VisAR, our IoT-connected AR experience.

Forging ahead into the Datasphere

What we found with the IoT data coming from the water pump was this: it’s complex. It’s constant. But if we can wrangle it, it can be extremely useful, providing detailed snapshots of the status and performance of the device or space it is monitoring.

So, to make these detailed snapshots human-centric, we decided to present the pump’s IoT data spatially, in AR. This meant using the major features of the pump, such as the tank and motor, as virtual points of interest (POIs) to anchor our live IoT data visualisation. Positioning the live data layer over the physical sensors, rather than generating a list of values, gives the data context and makes for a much more intuitive experience.
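To make the POI idea concrete, here is a minimal Python sketch of binding sensor readings to points of interest on the twin, so each value is drawn at the feature it describes. The sensor IDs (`tank_level`, `motor_rpm`), positions and units are hypothetical placeholders rather than values from the real pump, and VisAR itself was built in Unity, not Python.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PointOfInterest:
    """A named feature of the digital twin (e.g. the tank) that anchors live data."""
    name: str
    local_position: tuple[float, float, float]  # metres, in the twin's own frame
    unit: str


# Hypothetical sensor-to-POI bindings; IDs, positions and units are illustrative only.
POIS = {
    "tank_level": PointOfInterest("Tank", (0.0, 0.6, 0.0), "%"),
    "motor_rpm": PointOfInterest("Motor", (0.4, 0.2, 0.1), "rpm"),
}


def spatial_labels(readings: dict[str, float]) -> list[tuple[str, tuple[float, float, float], str]]:
    """Turn raw sensor readings into labels positioned at their POIs.

    Readings from sensors with no bound POI are skipped rather than listed,
    which is the point: data only appears where it has spatial context.
    """
    labels = []
    for sensor_id, value in readings.items():
        poi = POIS.get(sensor_id)
        if poi is None:
            continue
        labels.append((poi.name, poi.local_position, f"{value:g} {poi.unit}"))
    return labels
```

For instance, `spatial_labels({"tank_level": 62.5, "motor_rpm": 1450.0})` yields one label for the tank and one for the motor, each carrying the position where the renderer would place it.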

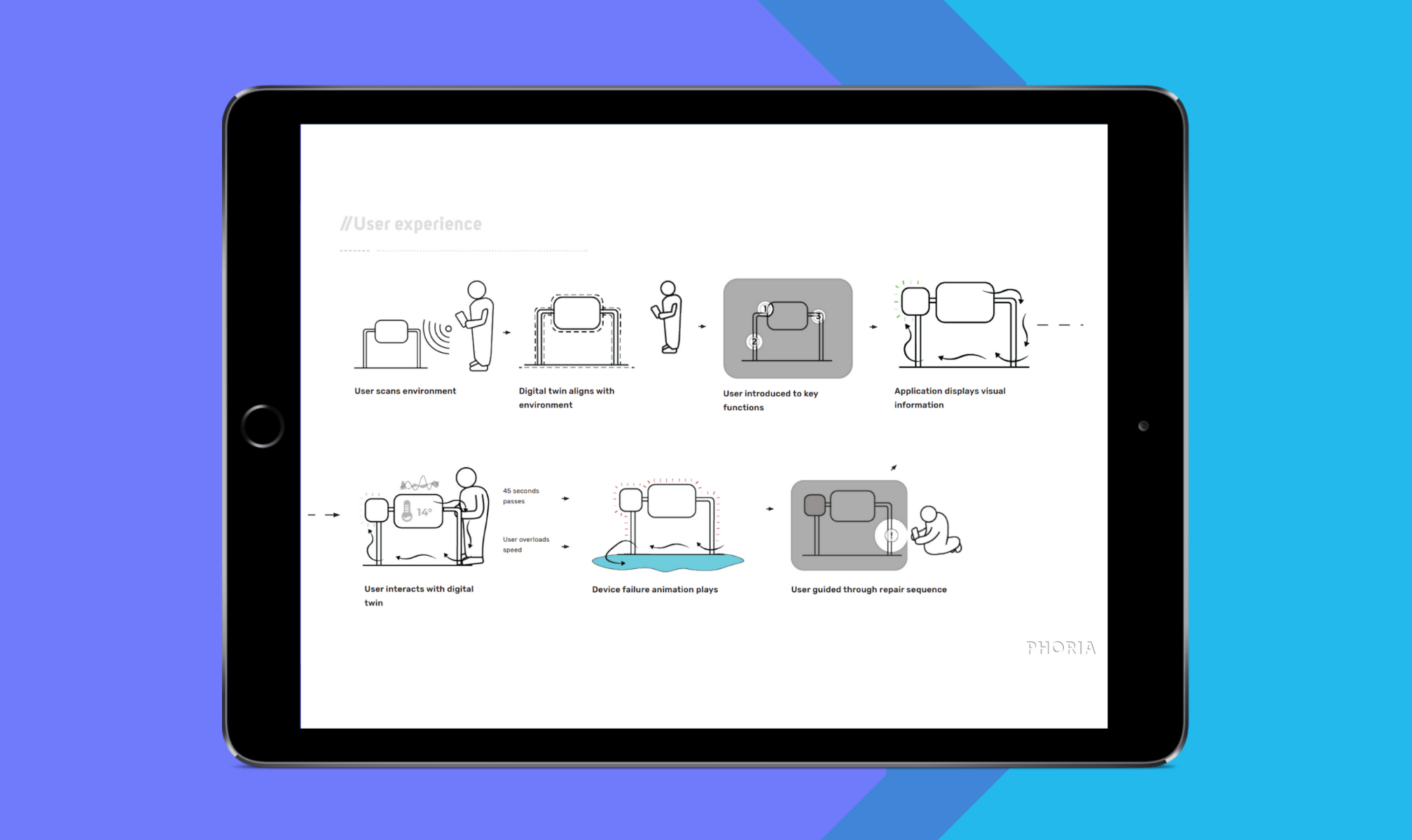

The first task on the way to VisAR was to give the experience some spatial context. An experience like this needs to understand where the user and the device are in space, and to recognise what it is looking at.

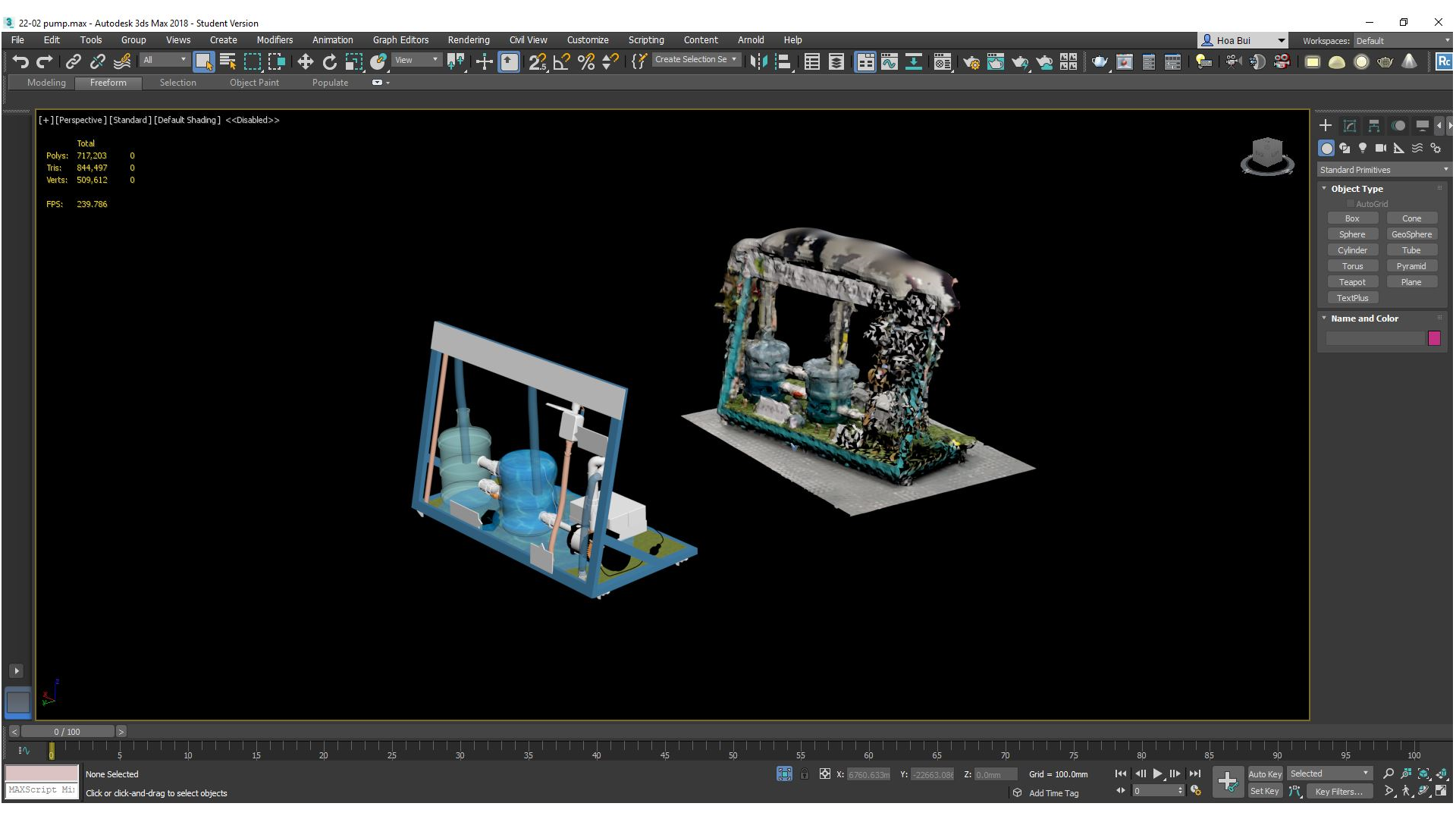

This is where the concept of “digital twins” comes into play. Our digital artist created a 1:1 digital twin of the water pump in 3ds Max, based on a 3D scan of the pump.

To create the spatial context needed, VisAR combined the real world with the digital one. This meant using AR to align the digital pump with the real pump, generating a virtual layer that could host live IoT data.

For those who might not be aware, “alignment” is perhaps the most important aspect of a digital twin. You need the real and virtual worlds to sync, so you can accurately layer the digital representation over the real object. It’s no good if your virtual pump is out in the carpark, when the real one you’re looking at is down in the basement.
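Alignment boils down to a coordinate transform: once the twin is anchored to the real pump, every point defined on the twin can be mapped into the AR world. The sketch below assumes the simplest case, a single anchor with a position plus a rotation about the vertical axis; the function name and conventions are our own illustration, not how VisAR or any particular AR SDK implements it.

```python
import math


def twin_to_world(local, anchor_position, anchor_yaw_deg):
    """Map a point from the digital twin's frame into the AR world frame.

    Assumes the twin is aligned to the real pump by one anchor pose:
    a position and a rotation about the vertical (y) axis. Uses a
    right-handed rotation convention; game engines may differ.
    """
    x, y, z = local
    theta = math.radians(anchor_yaw_deg)
    c, s = math.cos(theta), math.sin(theta)
    # Rotate about the y axis first...
    rx = c * x + s * z
    rz = -s * x + c * z
    # ...then translate by the anchor's position in the world.
    ax, ay, az = anchor_position
    return (rx + ax, y + ay, rz + az)
```

For example, a POI one metre along the twin's x axis, with the anchor at (2, 0, 3) and rotated 90 degrees, lands at roughly (2, 0, 2) in world space. Real AR SDKs track full six-degree-of-freedom poses, usually with quaternions, but the principle is the same: get the anchor right and every data label lands on the right part of the pump.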

The benefit of alignment is the ability to add accurately positioned, spatially contextualised, live IoT data to the virtual environment. Plus, it also opens the door for us developers to use spatial animations and visual effects. Who doesn’t love that?

True alignment was precisely what we wanted for the VisAR experience.

Pencils before pixels

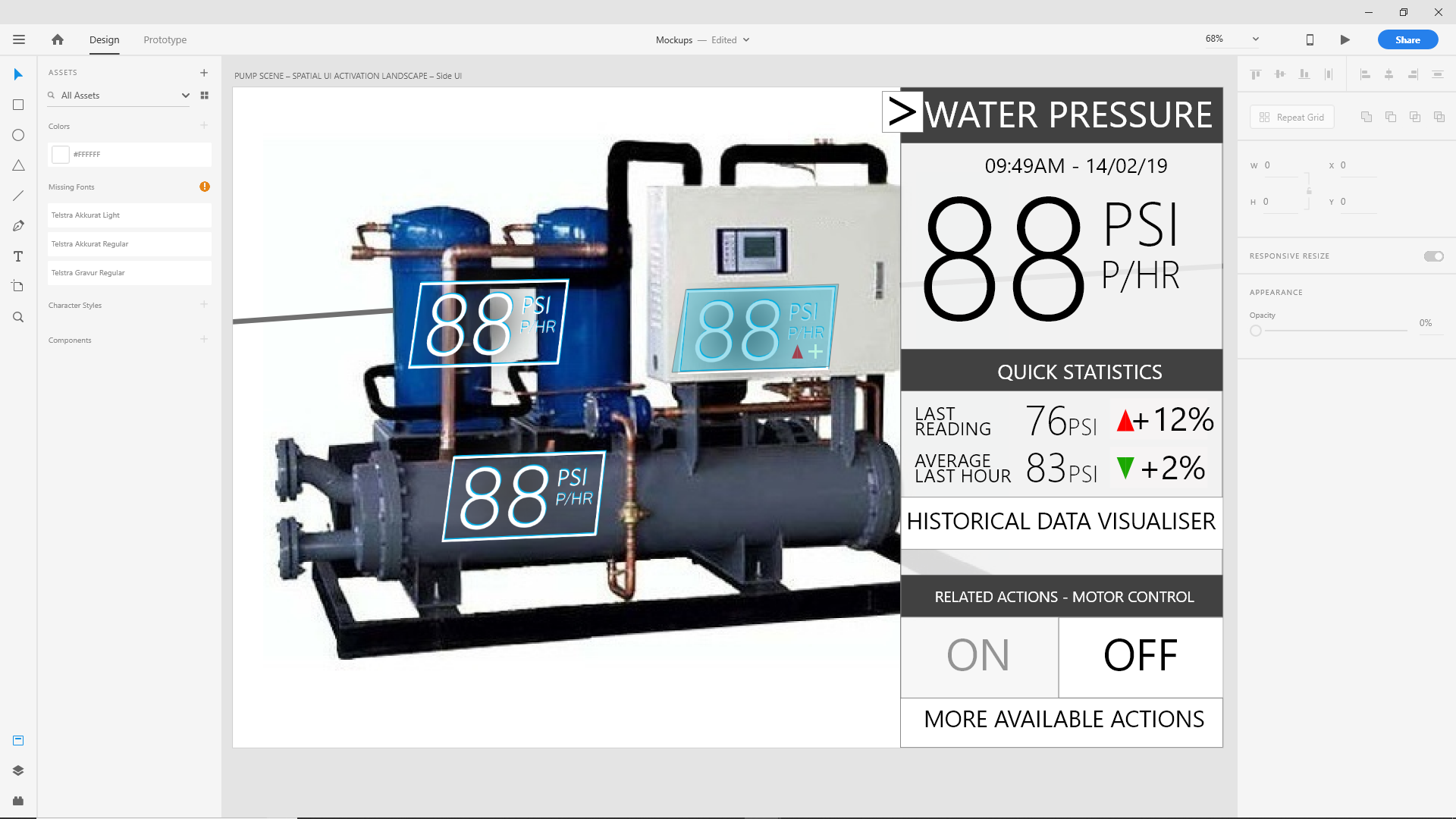

Our design phase started with sketches of how the data might be represented. We could visualise this data any way we wanted, but it needed to be intuitive; it needed to help temper the complex nature of live IoT sensor data. Our spatial designers took on the challenge with these core principles in mind. They sketched the pump with various possible locations for data visualisation, and bounced around ideas for how the spatial UI components might look. We needed to fully test out our concepts before moving on to the “mockup” stage in Adobe XD, a tool for designing and prototyping user experiences for web and mobile apps.

![image](https://miro.medium.com/max/3840/1*IB97kXaKrHpeGrCMKyFUQA.jpeg)

Once we locked down our favourite UI concepts, we used XD to simulate what the proposed UI components might look like in the final experience.

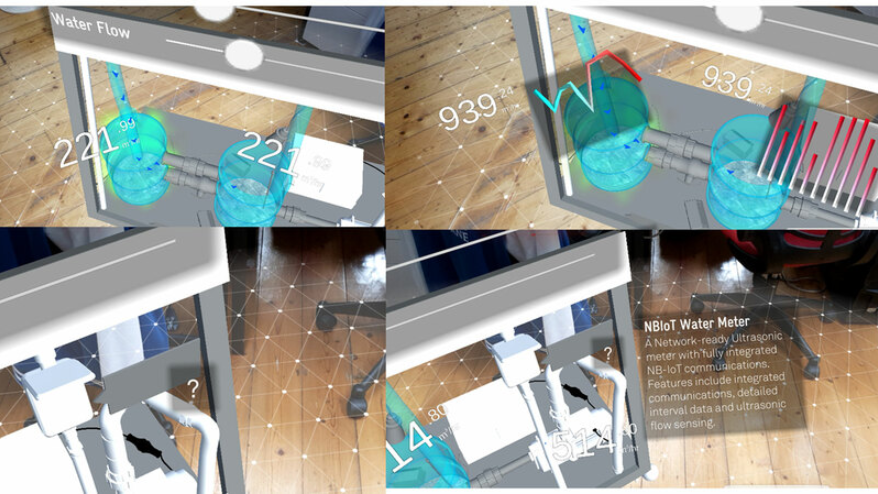

Here are a few of the spatial UI design concepts the team produced. Both IoT sensor data and text descriptions of the device are shown spatially.

Finding the right balance between essential information and spatial congestion was a challenge for the team.

Setting the scene

We almost exclusively use Unity to build these kinds of spatial experiences. Its support for rapid prototyping and its strong integration with industry-standard AR SDKs, like ARCore and ARKit, make it the best choice for projects like this.

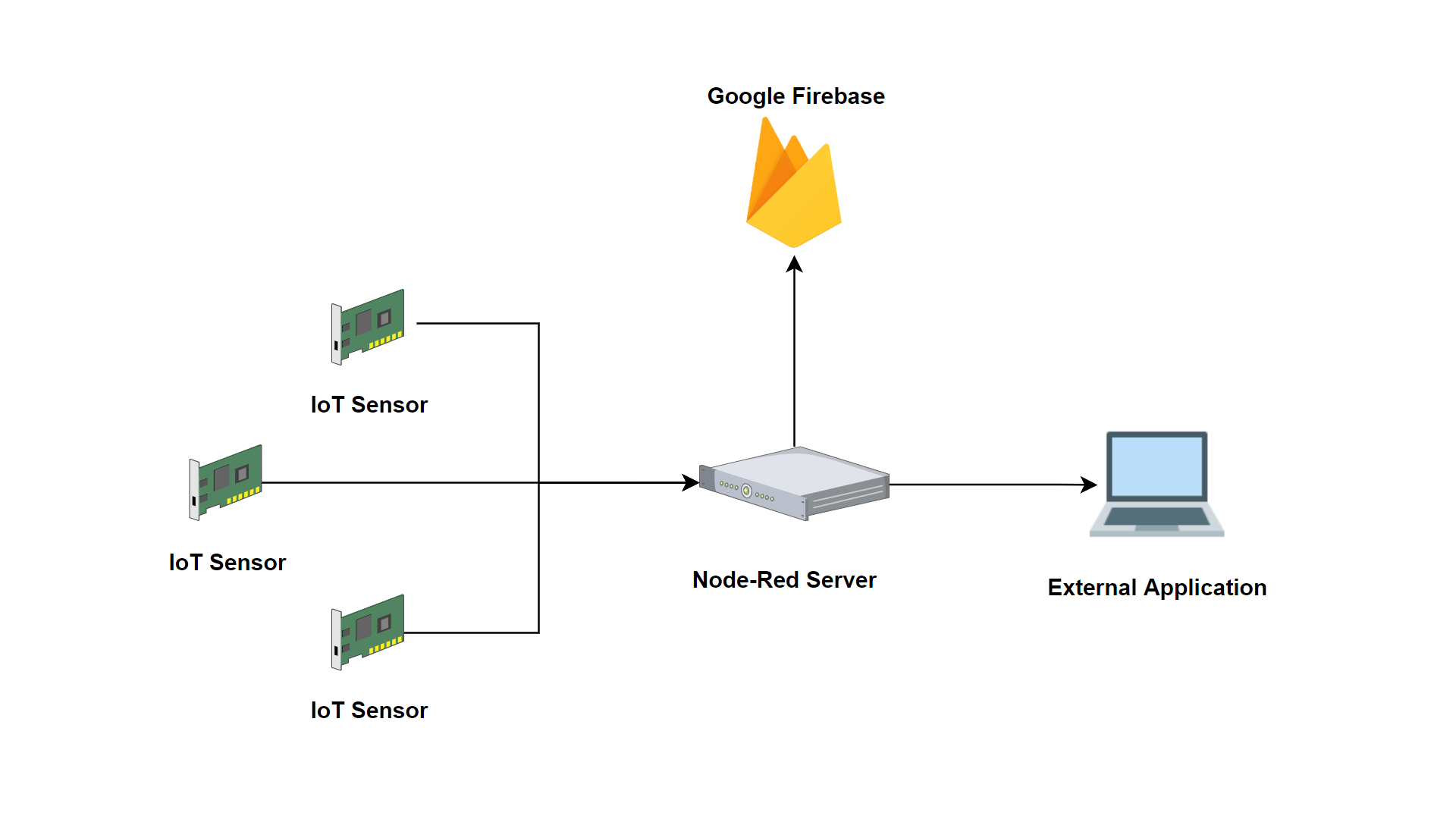

While we developed the spatial UI in Unity, we also needed to get the IoT sensors and our spatial UI components on speaking terms. This meant opening the IoT floodgates: getting the data held inside these sensors out to external applications like VisAR.

What we needed was a middle man. Telstra Labs set up the pump’s IoT sensors to send their live data to Node-RED, a system designed to capture IoT data and make it available for external use, in this case our experience. We did the rest, building a bridge between Node-RED and Unity so we could draw on the flow of live data values.

Once we had the data flowing, we still needed somewhere to keep it. So we added a Google Firebase connection to store the values as they arrived from the sensors. This gave us the ability to retrieve historical data for any of the pump’s sensors, and having that history on hand is very useful. For VisAR, it would mean users could form insights about the performance of their water pump over time and make more informed decisions.
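The data path just described (sensors to Node-RED, then a store for history) can be sketched in a few lines. This is a hedged illustration only: the JSON message shape is a hypothetical assumption, and the in-memory `ReadingHistory` class is a stand-in for Firebase, whose actual client API is not shown here.

```python
import json
from bisect import bisect_left, bisect_right, insort
from collections import defaultdict


class ReadingHistory:
    """In-memory stand-in for the Firebase store: timestamped readings per sensor."""

    def __init__(self):
        # sensor id -> list of (timestamp, value), kept sorted by timestamp
        self._series = defaultdict(list)

    def ingest(self, message: str) -> None:
        """Parse one JSON message forwarded from Node-RED and store it.

        Assumed (hypothetical) payload shape:
        {"sensor": str, "value": number, "ts": number}
        """
        data = json.loads(message)
        insort(self._series[data["sensor"]], (float(data["ts"]), float(data["value"])))

    def between(self, sensor: str, start: float, end: float) -> list[tuple[float, float]]:
        """Return one sensor's readings with start <= ts <= end, oldest first."""
        series = self._series.get(sensor, [])
        timestamps = [ts for ts, _ in series]
        return series[bisect_left(timestamps, start):bisect_right(timestamps, end)]

    def latest(self, sensor: str):
        """Most recent (ts, value) for a sensor, or None if nothing has arrived."""
        series = self._series.get(sensor)
        return series[-1] if series else None
```

Keeping each series sorted means messages that arrive out of order still end up in time order, and a time-range query is just two binary searches. `latest()` feeds the live spatial labels, while `between()` feeds the historical charts.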

Historical data also lets a user foresee and act on potential issues with the device. A downturn in the performance data for a specific part of the water pump could point to an imminent problem with that component, giving the user a chance to take pre-emptive action.
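One simple way to spot such a downturn is to compare the average of the most recent readings with the average of the readings just before them. The window size and 10% threshold below are illustrative assumptions, not values used in VisAR, and a moving-average comparison is a deliberately crude stand-in for proper predictive maintenance.

```python
from statistics import fmean


def performance_downturn(values, window=5, drop_fraction=0.1):
    """Flag a downturn when the latest window's mean falls more than
    `drop_fraction` below the mean of the window before it.

    `values` is a sequence of readings, oldest first. `window` and
    `drop_fraction` are illustrative defaults, not tuned values.
    Returns False when there is not yet enough history to compare.
    """
    if window < 1:
        raise ValueError("window must be at least 1")
    if len(values) < 2 * window:
        return False
    previous = fmean(values[-2 * window:-window])
    recent = fmean(values[-window:])
    if previous <= 0:
        return False  # no meaningful baseline to measure a drop against
    return (previous - recent) / previous > drop_fraction
```

In practice the window would be tuned to each sensor's sampling rate and noise, so that a single noisy reading does not trigger an alert.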

Gagan Singh, an expert Tech Strategist from Telstra Labs, talks more about how this process works:

“Our prototype of the connected industrial pump enables an intuitive interface based upon digital twin technology, that allows the users to monitor and operate the device remotely over Telstra’s network. In case of fault, the user can also reach out to a remote expert for support and follow augmented instructions to rectify the relevant fault. This can reduce the downtime and drive efficiency in various industrial applications.”

Armed with a live feed of IoT data, we were able to connect this data with the spatial UI components in our digital twin. We even added interactions with these components to show secondary IoT information, in the form of charts and text descriptions.

The possibilities extend even further than spatially positioned numbers and charts. As we gain access to IoT data on the speed of the pump motor and the water level in the tank, we can visualise even more.

Normally, you wouldn’t be able to see the water rushing through the pipes or how full the tank might be. But an aligned digital twin of the pump gives us the ability to visualise processes that are usually hidden.

Our digital artist prepared realistic water animations to reflect the amount of water in the tank, the directional flow and speed of the water travelling through the pipes. Our spatial developers took these animations and made them reactive and dynamic, so the digital water mimicked the IoT data collected from the sensors on the tank and motor.
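Making the water reactive amounts to mapping sensor values onto animation parameters. Here is a minimal sketch, assuming (hypothetically) that the tank sensor reports litres, the flow sensor reports signed litres per minute, and the renderer expects a fill fraction from 0 to 1 and a flow speed from -1 to 1. The smoothing step keeps noisy readings from making the water jump.

```python
def clamp(x: float, lo: float, hi: float) -> float:
    """Limit x to the closed range [lo, hi]."""
    return max(lo, min(hi, x))


class WaterVisual:
    """Turns raw tank and flow readings into animation parameters.

    Hypothetical assumptions: the level sensor reports litres, the flow
    sensor reports signed litres per minute, and the renderer expects a
    fill fraction in [0, 1] and a flow speed in [-1, 1].
    """

    def __init__(self, tank_capacity_l: float, max_flow_lpm: float, smoothing: float = 0.2):
        if not 0.0 < smoothing <= 1.0:
            raise ValueError("smoothing must be in (0, 1]")
        self.tank_capacity_l = tank_capacity_l
        self.max_flow_lpm = max_flow_lpm
        self.smoothing = smoothing
        self.fill = 0.0        # rendered water level: 0 = empty, 1 = full
        self.flow_speed = 0.0  # sign = direction through the pipes, magnitude = speed

    def update(self, level_l: float, flow_lpm: float) -> tuple[float, float]:
        """Move the rendered values one step towards the latest reading."""
        target_fill = clamp(level_l / self.tank_capacity_l, 0.0, 1.0)
        target_flow = clamp(flow_lpm / self.max_flow_lpm, -1.0, 1.0)
        # Exponential smoothing: smoothing=1.0 snaps straight to the reading.
        self.fill += self.smoothing * (target_fill - self.fill)
        self.flow_speed += self.smoothing * (target_flow - self.flow_speed)
        return self.fill, self.flow_speed
```

In a Unity build, the two returned values would drive a shader or animator parameter each frame; the Python version only shows the mapping from sensor data to visuals.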

![image](https://miro.medium.com/max/1600/1*Pl_BGbjzi_ugFmpXblDaXA.gif)

![image](https://miro.medium.com/max/1600/1*pJZwuvUtmnloX_Njq6Ko6g.gif)

Does this spatial UI bring me joy…?

We took the prototype to Telstra Labs, and after rounds of testing with our spatial team, one theme emerged: people felt the interface was cluttered, with spatial information competing for their attention. Although they found the visualisation of the spatial data helpful, there was too much going on in the virtual space at once.

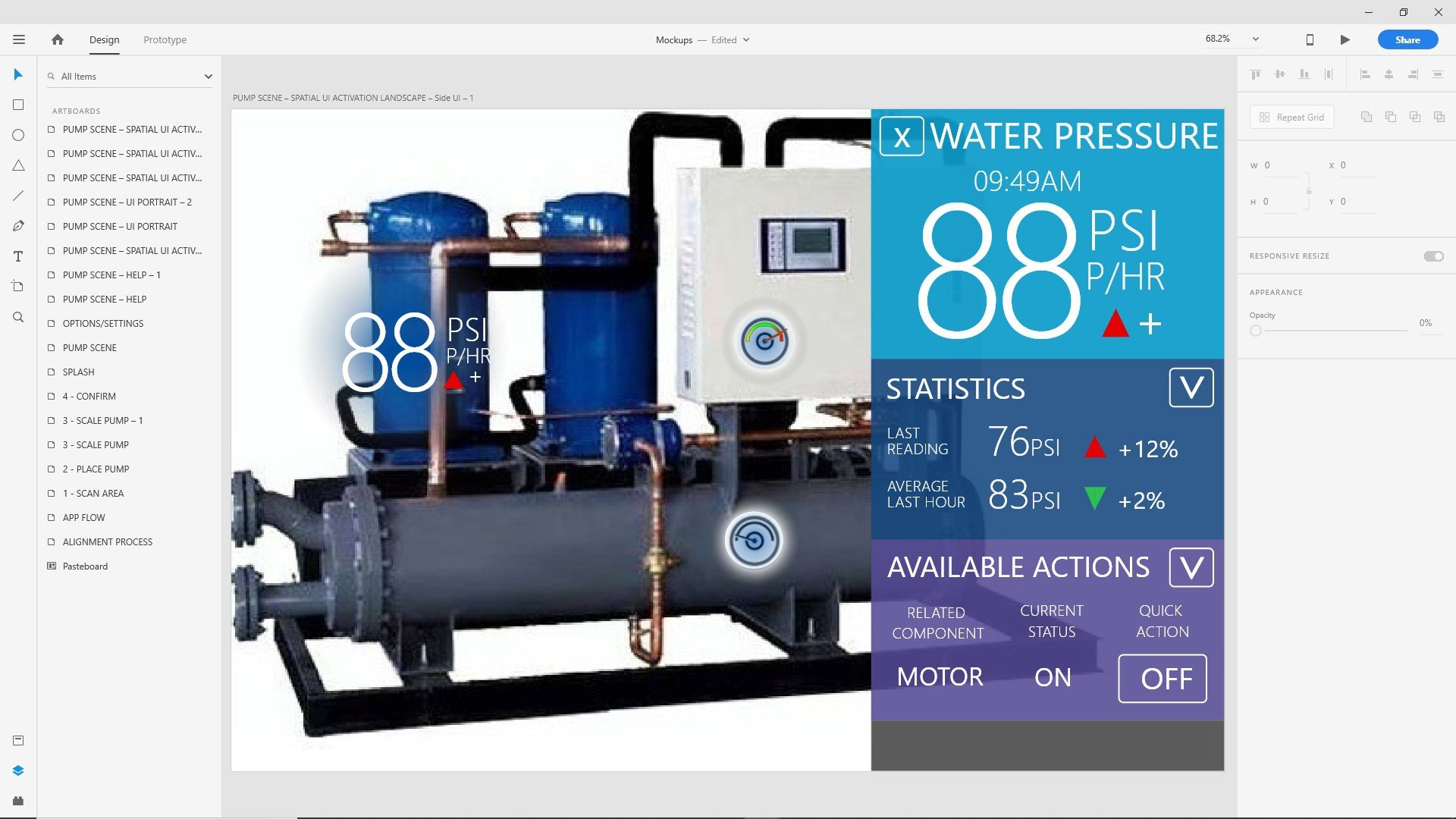

When designing for a digital spatial environment, it makes sense to choose 3D user interface components that can be positioned in digital space. However, after client feedback, we experimented with 2D user interface components to declutter the interface.

The new designs moved secondary data to a 2D screen overlay, shown only when the user interacted with the relevant spatial UI component. This meant that only the most relevant data was represented spatially.
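The decluttering rule can be expressed as a small piece of UI state: each POI keeps one primary value in the spatial layer, and its secondary details appear in the 2D overlay only once the user selects it. The class and field names here are hypothetical, for illustration only.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PoiData:
    primary: str                                        # always shown in the spatial layer
    secondary: list[str] = field(default_factory=list)  # shown only in the 2D overlay


class InterfaceState:
    """Hypothetical UI state: spatial labels stay minimal, details go to a 2D overlay."""

    def __init__(self, pois: dict[str, PoiData]):
        self.pois = pois
        self.selected: Optional[str] = None

    def tap(self, poi_name: str) -> None:
        """Select a POI; tapping the selected POI again closes the overlay."""
        self.selected = None if self.selected == poi_name else poi_name

    def spatial_layer(self) -> dict[str, str]:
        """One primary value per POI, positioned in the 3D scene."""
        return {name: data.primary for name, data in self.pois.items()}

    def overlay(self) -> list[str]:
        """Secondary data for the selected POI, or nothing when none is selected."""
        if self.selected is None:
            return []
        return self.pois[self.selected].secondary
```

Because at most one POI is selected at a time, the 2D overlay never shows more than one component's details, which is what keeps the spatial view uncluttered.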

And once we overhauled the visual identity of the experience to give it a more polished feel, we had our final product.

The final spatial interface

![image](https://miro.medium.com/max/3840/1*h2M7cQhoDl_MzAIVUxTiew.jpeg)

What’s next for the converted futurists?

VisAR could be a game-changer. A spatial data interface that connects people, data and spaces will revolutionise the manufacturing and construction industries. It will be the foundation for all smart environments, for smart cities of the future. No one will need to waste their time trying to interpret and untangle complex IoT data. We’ll have purpose-built experiences that complement this IoT data and put us back in the driver’s seat, so we can easily manage the devices that our businesses, and our lives, rely on.

We’ll be able to intuitively understand the status or performance of any connected device, anywhere, instantly. And it won’t just be limited to iPads or smartphones. We’ll be able to access these experiences through XR wearables, like Magic Leap.

So let’s keep exploring this connection between people, data and spaces. Let’s keep building experiences, prototypes and best-practice tutorials. Whatever it takes to get to a connected future. Because that’s a future I, for one, am looking forward to.

All Rights Reserved for Jesse Harrison