It’s the final frontier in artificial intelligence. It’s the star of countless films and novels, both the greatest villain and hero of modern-day fantasies. I’m talking about truly “intelligent” machines, sometimes called “hard” AI, or “general intelligence”. That is, an artificial intelligence that is intelligent “like us”, that is “conscious” or “self-aware”.

This is a subject more discussed by philosophers and pop-culture commentators than by actual researchers in artificial intelligence. Its terms are too loosely defined, its consequences of too little practical significance to interest most data scientists or software engineers. Philosophers and alarmist journalists might concern themselves with the line between simple maths and a thinking being, but for practical purposes, the current state of the art in AI seems so far away from any truly intelligent machine as to make the question moot.

But I think that is a missed opportunity. A good understanding of the actual practice of artificial intelligence brings an entirely different, and more useful, perspective to the question. Indeed, my belief is that far from being a distant concern or a hypothetical, questions about how we interact with intelligent machines are relevant right now, and apply to systems we already encounter every day.

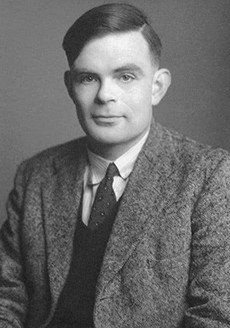

A discussion of the intelligence of computers must start with what is, without a doubt, the defining work on the subject: Alan Turing’s “Computing Machinery and Intelligence”. In this paper, he first proposes what will become one of the defining tropes in discussions of “hard” AI, or computers that think like people. It’s what he calls “The Imitation Game”, and what has become known as “The Turing Test”. I’ll let him explain it:

“…the problem can be described in terms of a game which we call the ‘imitation game.’ It is played with three people, a man ‘A’, a woman ‘B’, and an interrogator ‘C’, who may be of either sex. The interrogator stays in a room apart from the other two. The object of the game for the interrogator is to determine which of the other two is the man and which is the woman. He knows them by labels X and Y, and at the end of the game he says either ‘X is A and Y is B’ or ‘X is B and Y is A.’ The interrogator is allowed to put questions to A and B… We now ask the question, ‘What will happen when a machine takes the part of A in this game?’”

This crystallises the problem of computer intelligence. Supposing our hidden interlocutor was a computer, and not a person, how would we, as the interrogator, know? What differences would we expect to see? Can we imagine any question or series of questions which would definitively distinguish between a flesh-and-blood, conscious human being, and a plastic-and-wires, unconscious machine?

Turing did not intend this as a practical benchmark, though it has often been misinterpreted as such. Turing is not implying that a machine that can fool a human is “intelligent”, and a machine that cannot pass as human is not. That’s an asinine interpretation of a much more sophisticated argument. What Turing describes is a startling insight into the nature of the problem of intelligence. Turing tells us that the distinction between thinking and not, between consciousness and somnambulism, lies not with the machine, but with the person perceiving it. In other words, as we have learnt from recent advances in image generation, faking it is the same as actually doing it. What Turing tells us is that it doesn’t matter whether the machine is thinking or not. What matters is whether we treat it as if it is.

Let me explain.

Every artificial intelligence algorithm, from the simplest classifier to the most sophisticated deep-learning system, works on a similar principle. Given a set of data, a collection of observations about the world, the algorithm attempts to construct a set of rules (a model) which explains those observations. For simple algorithms, those models are extremely simple: “A dinosaur with feathers is this much more likely to be a carnivore”, “A monarch who takes the throne after the age of 14 rules for this many more years.” For complex algorithms, the models are much harder to explain. An image recognition algorithm (for example, one that identifies medieval weaponry) constructs an abstract representation of the image it is shown. To human observers, that representation is entirely opaque, but to the algorithm it contains all the information it needs to distinguish glaive from halberd, bardiche from bec-de-corbin. An algorithm that creates descriptions of novel hamburgers references a vast body of parameters to decide whether to feed us a “Beef burger with cured pork” or a “Beef burger with cured cancer”. But in all cases, these algorithms work towards the same outcome: to minimise surprise. The right answer is the one that renders the world a little less baffling, a little more secure.
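To make “minimising surprise” concrete, here is a minimal sketch of the simplest kind of model described above. Everything in it is an assumption made for illustration: the toy dinosaur observations are invented, and the names `fit` and `average_surprise` are hypothetical. Surprise is measured in the usual information-theoretic way, as the negative log of the probability the model assigned to what actually happened.

```python
import math
from collections import Counter

# Invented toy observations: (has_feathers, is_carnivore).
OBSERVATIONS = [
    (True, True), (True, True), (True, True), (True, False),
    (False, False), (False, False), (False, True), (False, False),
]

def fit(observations):
    """Estimate P(carnivore | feathers) by counting, with add-one smoothing."""
    counts = Counter(observations)
    model = {}
    for feathers in (True, False):
        yes = counts[(feathers, True)] + 1
        no = counts[(feathers, False)] + 1
        model[feathers] = yes / (yes + no)
    return model

def average_surprise(model, observations):
    """Mean surprise in bits: -log2 of the probability given to each outcome."""
    total = 0.0
    for feathers, carnivore in observations:
        p = model[feathers] if carnivore else 1.0 - model[feathers]
        total += -math.log2(p)
    return total / len(observations)

# A model that knows nothing treats every dinosaur as a coin flip.
naive = {True: 0.5, False: 0.5}
learned = fit(OBSERVATIONS)

print("naive surprise:  ", average_surprise(naive, OBSERVATIONS))
print("learned surprise:", average_surprise(learned, OBSERVATIONS))
```

The learned model is less surprised by the same observations than the coin-flip model is. Far larger systems pursue a comparable goal with far more parameters, which is part of why their models are so much harder to read.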

In this, these algorithms are akin to human beings. I’m no expert on human cognition, but it’s easy to imagine human thought the same way. Watch a baby’s delight at a game of “peek-a-boo”, and you can see the process happen before your eyes: “A face! Delightful! But what’s this? The face is gone! What has happened? Where can it be? Oh there it is! Amazing!” A baby is endlessly amused by this process, seemingly no matter how often it is repeated. But what is delightful to them is not complete surprise, but rather the fulfilment of an expectation: the baby’s joy grows as they learn the rules of the game. The delight is not in being surprised when the face disappears, but in having their expectations confirmed when it returns. It is probably overly simplistic to say that this process is the underpinning of all human thought, but it’s clear that, at a very deep level, our thinking is closely tied to the process of predicting.

Just like a machine learning algorithm, we construct models to explain the world around us. Some of these are very simple models. The baby playing peek-a-boo is learning one of the most fundamental of these models: object permanence, the idea that objects in the world continue to exist, even if we cannot immediately perceive them. This model is enormously useful in helping us make sense of the world around us. We close our eyes, and we are not startled when we reopen them to find the world roughly the same as we left it. We put something down, walk away, and when we need it again, we come back to the same spot to find it. This expectation is so essential to our experience of the world that it is easy to forget that it is a mental model — a construct of our minds. We continue to believe in objects’ existence outside our perception not because we have any direct evidence for this, but because it is enormously useful for us to do so.

There is another fundamental model in human thought: theory of mind. This is the ability to explain the actions of another being (or of yourself) by reference to the existence of hidden mental states — beliefs, emotions, intent, knowledge. This model allows us to make sense of complex behaviour by turning it into a kind of narrative. Intelligence is a story we tell ourselves to explain unpredictable behaviour.

Perhaps the most sophisticated models humans create are what we know as narratives. A plot. A description of a series of events, tied together by some connective tissue of cause and effect, some sense of purpose and internal consistency — a deeper meaning. The canonical example comes from E. M. Forster’s “Aspects of the Novel”.

“‘The king died and then the queen died’ is a story. But ‘the king died and then the queen died of grief’ is a plot.”

In other words, a series of events by itself is just meaningless noise. What transforms it into something meaningful is some sense of causality, of inevitability, of predictability.

I’ve spent the last nine months exploring many kinds of artificial intelligence, from the extremely simple to the extremely complex. I’ve built a model which can compress information from hundreds of thousands of data-points into a numeric measure of the meaning of a film. I’ve built a model which can paint novel works of art. But the model which came closest to feeling actually intelligent was probably the simplest of all of them: it simply guided the movement of small dots on a screen. The dots’ movement, chasing or fleeing, is governed by some simple rules, a few lines of code and some basic geometry. But to the observer what emerges is a complex world of personalities and stories. The chasing dot is friendly and eager. The fleeing dot is timid and shy. The friendly dot’s overtures towards the shy dot are doomed to be constantly rejected. These personalities and plots exist nowhere in the code. They exist only in the imagination of the person observing the game. In other words, their intelligence is not a property of the mind of the dots, it is a property of the mind of the observer.
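To show how little is needed, here is a rough sketch of two such dots. It is not the code behind the original experiment; it is a hypothetical reconstruction, and the starting positions, the speeds, and the names `step_toward` and `simulate` are all assumptions chosen for illustration.

```python
import math

def step_toward(pos, target, speed):
    """Move pos by `speed` along the line to target (negative speed moves away)."""
    dx, dy = target[0] - pos[0], target[1] - pos[1]
    dist = math.hypot(dx, dy)
    if dist == 0:
        return pos  # no direction to move in
    return (pos[0] + speed * dx / dist, pos[1] + speed * dy / dist)

def simulate(steps=50, chase_speed=1.0, flee_speed=0.8):
    """Run the chase and return the list of (chaser, fleer) positions per step."""
    chaser, fleer = (0.0, 0.0), (10.0, 5.0)  # assumed starting positions
    history = []
    for _ in range(steps):
        # Both dots react to where the other one was at the start of the step.
        new_chaser = step_toward(chaser, fleer, chase_speed)
        new_fleer = step_toward(fleer, chaser, -flee_speed)
        chaser, fleer = new_chaser, new_fleer
        history.append((chaser, fleer))
    return history

history = simulate()
chaser, fleer = history[-1]
print("gap after 50 steps:", math.dist(chaser, fleer))
```

Nothing here mentions eagerness or shyness. There are two positions, two speeds, and a direction, and the “pursuit” a viewer sees is read into them.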

In systems theory, they use the word “emergence” to describe the phenomenon of complex behaviour arising from the interaction of many, much simpler systems. A common belief about a hypothetical “conscious” AI is that its consciousness must be an emergent property — that a sufficiently complex system, with enough processing power and enough input data will somehow “wake up” to consciousness. But I think this model of thought fundamentally misunderstands what intelligence is — it is not an intrinsic quality, but rather an observed one.

In other words, that magical “spark” that awakens in a complex system, that transforms it from a lifeless machine into a conscious being, is not the product of its ability to think, but rather it is the product of our ability to empathise. There’s no magical moment lying somewhere in the future, when a machine will cross some critical threshold of sophistication, and look back at us through the computer screen. That threshold exists inside all of us, and is crossed in small ways every day.

Every moment where our pattern-forming brain transforms a machine’s behaviour into a personality, that machine is, in a very real sense, alive to us. Every time we curse at an unreliable laptop, every time we silently thank our music player for making a great choice, every time we feel sympathy for a character in a video game, a small consciousness is briefly brought to life.

What an exciting world this way of thinking opens up to us! Every algorithm, from the simplest classifier to the most sophisticated image generator, is the product of a human idea, the encoding of human beliefs. They are not just tools, but disembodied thoughts, shards of intelligence acting in the world. When we interact with an algorithm — when we are shown a movie recommendation, when we are pre-approved for a bank loan, when we are scanned through a security gate — we are interacting with a small reflection of a human being, a tiny fragment of a mind. The data they are trained on, the features in that data that they consider, their parameters for success and failure, all reflect the thoughts and desires, the hopes and the values of the people who created them. Far from being an alien intruder, or a foreign threat, machines are simply a new vessel for carrying forward our own thoughts and feelings. In a very real sense, they are our children. The machines will not replace us, they are us.

And like our children, they carry on our prejudices. We have seen countless examples of algorithms which embed the assumptions of their creators. In one of my earliest essays on this subject I showed how, for example, my hubris in thinking I understood knitting led to my creating a model which fundamentally misunderstood how to recommend knitting patterns. Our inability to empathise with machine intelligences lends them the veneer of impartiality. We accept from them unquestioningly judgements that we would never accept from a human being. Because we are blind to their humanness, we are blind to their fallibility.

Nine months ago, when I started this series of essays, I wrote about the Industrial Revolution and the upheavals it caused. Similar upheavals are, I believe, likely in our future again. But what I’ve learned in the course of these essays, and what I’ve tried to argue in this essay, is that these are fundamentally caused by human factors: by human beliefs, human fears, and human hopes. These algorithms are sometimes complex and sometimes surprising, but they are never so surprising or so complex that we cannot come to understand, and to some extent predict, their likely strengths and weaknesses.

I’m neither a pessimist nor an alarmist about the future of artificial intelligence and its impact on our world. I believe that we will all, in future, come to see these algorithms simply as extensions of the people and systems we already interact with every day. If they extend and amplify the power of some groups over us, then I hope they will also expand our capacity to resist that power. I hope that a greater understanding of these algorithms, their origins, and their potential, will help us to build a better future alongside them.

All Rights Reserved for Simon Carryer