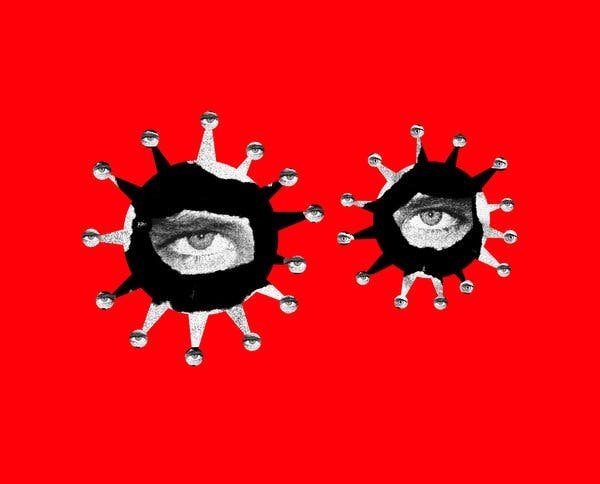

Thousands of internal directives and reports reveal how Chinese officials stage-managed what appeared online in the early days of the outbreak.

In the early hours of Feb. 7, China’s powerful internet censors experienced an unfamiliar and deeply unsettling sensation. They felt they were losing control.

The news was spreading quickly that Li Wenliang, a doctor who had warned about a strange new viral outbreak only to be threatened by the police and accused of peddling rumors, had died of Covid-19. Grief and fury coursed through social media. To people at home and abroad, Dr. Li’s death showed the terrible cost of the Chinese government’s instinct to suppress inconvenient information.

Yet China’s censors decided to double down. Warning of the “unprecedented challenge” Dr. Li’s passing had posed and the “butterfly effect” it may have set off, officials got to work suppressing the inconvenient news and reclaiming the narrative, according to confidential directives sent to local propaganda workers and news outlets.

They ordered news websites not to issue push notifications alerting readers to his death. They told social platforms to gradually remove his name from trending topics pages. And they activated legions of fake online commenters to flood social sites with distracting chatter, stressing the need for discretion: “As commenters fight to guide public opinion, they must conceal their identity, avoid crude patriotism and sarcastic praise, and be sleek and silent in achieving results.”

China’s censors issued special instructions to manage anger over Dr. Li’s death.

To news websites and social media platforms:

关于武汉市中心医院李文亮医生去世一事,各网站、新媒体要严格规范稿源,严禁使用自媒体稿件擅自报道,不得弹窗PUSH,不评论、不炒作。互动环节稳妥控制热度,不设话题,逐步撤出热搜,严管有害信息。

“… do not use push notifications, do not post commentary, do not stir up speculation. Safely control the fervor in online discussions, do not create hashtags, gradually remove from trending topics, strictly control harmful information.”

To local propaganda workers:

各区、县(市)网信办:根据2月7日省网信办视频例会精神,现就近期工作提示如下:一、准确把握网上舆情严峻复杂的形势日前,李文亮医生去世已迅速成为网络热点。我们要清醒认识到此事所引发的蝴蝶效应、破窗效应、雪球效应,对我们的网上舆论管控工作提出了前所未有的挑战。各地网信部门要高度关注网上舆情,对于严重损害党和政府公信力、矛头直指政治体制的,要坚决管控;在其他事情上对于宣泄性的要引导,注意方式方法。

“We must recognize with clear mind the butterfly effect, broken windows effect and snowball effect triggered by this event, and the unprecedented challenge that it has posed to our online opinion management and control work. All Cyberspace Administration bureaus must pay heightened attention to online opinion, and resolutely control anything that seriously damages party and government credibility and attacks the political system …”

The orders were among thousands of secret government directives and other documents that were reviewed by The New York Times and ProPublica. They lay bare in extraordinary detail the systems that helped the Chinese authorities shape online opinion during the pandemic.

At a time when digital media is deepening social divides in Western democracies, China is manipulating online discourse to enforce the Communist Party’s consensus. To stage-manage what appeared on the Chinese internet early this year, the authorities issued strict commands on the content and tone of news coverage, directed paid trolls to inundate social media with party-line blather and deployed security forces to muzzle unsanctioned voices.

Though China makes no secret of its belief in rigid internet controls, the documents convey just how much behind-the-scenes effort is involved in maintaining a tight grip. It takes an enormous bureaucracy, armies of people, specialized technology made by private contractors, the constant monitoring of digital news outlets and social media platforms — and, presumably, lots of money.

It is much more than simply flipping a switch to block certain unwelcome ideas, images or pieces of news.

China’s curbs on information about the outbreak started in early January, before the novel coronavirus had even been identified definitively, the documents show. When infections started spreading rapidly a few weeks later, the authorities clamped down on anything that cast China’s response in too “negative” a light.

The United States and other countries have for months accused China of trying to hide the extent of the outbreak in its early stages. It may never be clear whether a freer flow of information from China would have prevented the outbreak from morphing into a raging global health calamity. But the documents indicate that Chinese officials tried to steer the narrative not only to prevent panic and debunk damaging falsehoods domestically. They also wanted to make the virus look less severe — and the authorities more capable — as the rest of the world was watching.

The documents include more than 3,200 directives and 1,800 memos and other files from the offices of the country’s internet regulator, the Cyberspace Administration of China, in the eastern city of Hangzhou. They also include internal files and computer code from a Chinese company, Urun Big Data Services, that makes software used by local governments to monitor internet discussion and manage armies of online commenters.

The documents were shared with The Times and ProPublica by a hacker group that calls itself C.C.P. Unmasked, referring to the Chinese Communist Party. The Times and ProPublica independently verified the authenticity of many of the documents, some of which had been obtained separately by China Digital Times, a website that tracks Chinese internet controls.

The C.A.C. and Urun did not respond to requests for comment.

“China has a politically weaponized system of censorship; it is refined, organized, coordinated and supported by the state’s resources,” said Xiao Qiang, a research scientist at the School of Information at the University of California, Berkeley, and the founder of China Digital Times. “It’s not just for deleting something. They also have a powerful apparatus to construct a narrative and aim it at any target with huge scale.”

“This is a huge thing,” he added. “No other country has that.”

Controlling a Narrative

China’s top leader, Xi Jinping, created the Cyberspace Administration of China in 2014 to centralize the management of internet censorship and propaganda as well as other aspects of digital policy. Today, the agency reports to the Communist Party’s powerful Central Committee, a sign of its importance to the leadership.

The C.A.C.’s coronavirus controls began in the first week of January. An agency directive ordered news websites to use only government-published material and not to draw any parallels with the deadly SARS outbreak in China and elsewhere that began in 2002, even as the World Health Organization was noting the similarities.

At the start of February, a high-level meeting led by Mr. Xi called for tighter management of digital media, and the C.A.C.’s offices across the country swung into action. A directive in Zhejiang Province, whose capital is Hangzhou, said the agency should not only control the message within China, but also seek to “actively influence international opinion.”

Agency workers began receiving links to virus-related articles that they were to promote on local news aggregators and social media. Directives specified which links should be featured on news sites’ home screens, how many hours they should remain online and even which headlines should appear in boldface.

Online reports should play up the heroic efforts by local medical workers dispatched to Wuhan, the Chinese city where the virus was first reported, as well as the vital contributions of Communist Party members, the agency’s orders said.

Headlines should steer clear of the words “incurable” and “fatal,” one directive said, “to avoid causing societal panic.” When covering restrictions on movement and travel, the word “lockdown” should not be used, said another. Multiple directives emphasized that “negative” news about the virus was not to be promoted.

When a prison officer in Zhejiang who lied about his travels caused an outbreak among the inmates, the C.A.C. asked local offices to monitor the case closely because it “could easily attract attention from overseas.”

Officials ordered the news media to downplay the crisis.

To all news websites:

关于新型冠状病毒感染的肺炎疫情,各网站、新媒体在报道确诊病例、死亡病例情况时,要注意保护隐私、不点名道姓,尽量避免使用患者真实照片和影像,可适当进行技术处理。要规范信息来源,避免使用“医生认为”之类表述,尽量指出具体信息来源。不使用“无法治愈”“致命”等标题,防止引起社会恐慌。

“Do not use ‘incurable,’ ‘fatal’ or similar headlines to avoid causing societal panic.”

1、各媒体对有关部门处理不担当不作为干部和阻碍疫情防控等通报个案的报道要适度,原则上不集纳。2、各媒体在报道限制出行、受控出入等防控举措时,不使用封城、封路、封门、封条等提法。3、对基层管控措施落实情况的舆论监督要适度,原则上通过内部渠道反映。4、关于治疗新型冠状病毒药物研究进展情况,要依据国家卫健部门发布的权威信息进行报道,对尚未投入临床使用的药物其疗效要审慎把握,不随意转载网上消息。

“… when reporting on limits on travel, controls on movement and other prevention and control measures, do not use formulations like lockdown, road closures, sealed doors or paper seals.”

To all news websites and apps:

关于“新冠肺炎疫情”防控问题的负面新闻报道,各网站及客户端,和其他各类新媒体新应用平台,一律不得弹窗推送。如确需报道,只采用人民日报、新华社、央视以及卫健委、外交部等相关部门,湖北省及武汉市相关部门的权威信息。我办将加大力度巡查督查,如有发现违规弹窗推送行为,立即严肃处理。以上指令内容,务请落实属地责任和主体责任,迅速落实并严格保密。

“Do not use pop-up notifications … for any negative news reports about the prevention and control of the ‘novel coronavirus epidemic.’”

News outlets were told not to play up reports on donations and purchases of medical supplies from abroad. The concern, according to agency directives, was that such reports could cause a backlash overseas and disrupt China’s procurement efforts, which were pulling in vast amounts of personal protective equipment as the virus spread abroad.

“Avoid giving the false impression that our fight against the epidemic relies on foreign donations,” one directive said.

C.A.C. workers flagged some on-the-ground videos for purging, including several that appear to show bodies exposed in public places. Other clips that were flagged appear to show people yelling angrily inside a hospital, workers hauling a corpse out of an apartment and a quarantined child crying for her mother. The videos’ authenticity could not be confirmed.

The agency asked local branches to craft ideas for “fun at home” content to “ease the anxieties of web users.” In one Hangzhou district, workers described a “witty and humorous” guitar ditty they had promoted. It went, “I never thought it would be true to say: To support your country, just sleep all day.”

Then came a bigger test.

‘Severe Crackdown’

Dr. Li’s death in Wuhan loosed a geyser of emotion that threatened to tear Chinese social media out from under the C.A.C.’s control.

It did not help when the agency’s gag order leaked onto Weibo, a popular Twitter-like platform, fueling further anger. Thousands of people flooded Dr. Li’s Weibo account with comments.

The agency had little choice but to permit expressions of grief, though only to a point. If anyone was sensationalizing the story to generate online traffic, their account should be dealt with “severely,” one directive said.

The day after Dr. Li’s death, a directive included a sample of material that was deemed to be “taking advantage of this incident to stir up public opinion”: It was a video interview in which Dr. Li’s mother reminisces tearfully about her son.

The scrutiny did not let up in the days that followed. “Pay particular attention to posts with pictures of candles, people wearing masks, an entirely black image or other efforts to escalate or hype the incident,” read an agency directive to local offices.

Larger numbers of online memorials began to disappear. The police detained several people who formed groups to archive deleted posts.

In Hangzhou, propaganda workers on round-the-clock shifts wrote up reports describing how they were ensuring people saw nothing that contradicted the soothing message from the Communist Party: that it had the virus firmly under control.

Officials in one district reported that workers in their employ had posted online comments that were read more than 40,000 times, “effectively eliminating city residents’ panic.” Workers in another county boasted of their “severe crackdown” on what they called rumors: 16 people had been investigated by the police, 14 given warnings and two detained. One district said it had 1,500 “cybersoldiers” monitoring closed chat groups on WeChat, the popular social app.

Researchers have estimated that hundreds of thousands of people in China work part-time to post comments and share content that reinforces state ideology. Many of them are low-level employees at government departments and party organizations. Universities have recruited students and teachers for the task. Local governments have held training sessions for them.

Local officials turned to informants and trolls to control opinion.

Xiaoshan District, Feb. 12

三是加强舆论引导,凝聚网络共识。加强网军统一指挥,组织全区网评员实时待命,及时开展舆论引导。强化网络正能量推送及疫情防控科普工作,组织区级媒体、网评员撰写、转发正能量推文400余则,开展防疫科普宣传100余次,发动网评员跟评、引导4万余人次,有效消除市民恐慌心理,提振防控信心,为打赢疫情防控阻击战营造良好的舆论氛围。

“Mobilized online commenters to comment and guide more than 40,000 times, effectively eliminating city residents’ panic, boosting confidence in prevention and control efforts, and creating a good atmosphere of public opinion for winning the battle against the epidemic.”

Tonglu County, Feb. 13

通过重点加强对论坛、微博、微信、移动新闻客户端等网上互动区域的实时监测,确保及时发现、研判、处置重要舆情及各类谣言等有害信息。此外,积极发挥全县网评员和属地瞭望哨信息员作用,重点加强对朋友圈、微信群等封闭、半封闭网络平台的信息监测,为打击网络谣言和正面宣传引导搜集基础素材。针对网上发现的谣言信息,加强与涉事区域和涉事单位的沟通,并会同公安机关严厉打击。截止2月13日,我县共发布辟谣信息15条,转载辟谣信息62条,由公安机关落地查人16人,教育告诫14人,行政拘留2人,传谣造谣当事人自行删除不实信息20余条,组织网评员转发辟谣信息6000余条,及时解疑释惑,回应网民关切。

“As of Feb. 13, our county published 15 rumor-debunking posts, reposted 62 rumor-debunking posts, 16 people were investigated by public security organs, 14 people were educated and admonished, two people were put in administrative detention …”

Fuyang District, early February

及时处置防疫工作中涉确诊数量、夸大疫情影响、人员感染隔离等谣言信息。防疫期间,与公安网警共同依法落地查处28人次,官方平台发布和转载辟谣信息56条。避免不实信息蔓延造成公众恐慌,消解防疫工作成效。联动网军、自媒体、舆情监测服务公司力量,捕捉敏感线索,确保舆情发现早。发动全区1500余名网军力量,及时上报微信群等半封闭圈子舆情信息。发挥区自媒体联盟作用,借助其爆料通道收集一手线索。与中青舆情监测服务公司保持密切联系,加大软件巡查频次,精准设置关键字,提升灵敏度。

“Mobilized the force of more than 1,500 cybersoldiers across the district to promptly report information about public opinion in WeChat groups and other semiprivate chat circles.”

Engineers of the Troll

Government departments in China have a variety of specialized software at their disposal to shape what the public sees online.

One maker of such software, Urun, has won at least two dozen contracts with local agencies and state-owned enterprises since 2016, government procurement records show. According to an analysis of computer code and documents from Urun, the company’s products can track online trends, coordinate censorship activity and manage fake social media accounts for posting comments.

One Urun software system gives government workers a slick, easy-to-use interface for quickly adding likes to posts. Managers can use the system to assign specific tasks to commenters. The software can also track how many tasks a commenter has completed and how much that person should be paid.

According to one document describing the software, commenters in the southern city of Guangzhou are paid $25 for an original post longer than 400 characters. Flagging a negative comment for deletion earns them 40 cents. Reposts are worth one cent apiece.

Urun makes a smartphone app that streamlines their work. They receive tasks within the app, post the requisite comments from their personal social media accounts, then upload a screenshot, ostensibly to certify that the task was completed.

The company also makes video game-like software that helps train commenters, documents show. The software splits a group of users into two teams, one red and one blue, and pits them against each other to see which can produce more popular posts.

Other Urun code is designed to monitor Chinese social media for “harmful information.” Workers can use keywords to find posts that mention sensitive topics, such as “incidents involving leadership” or “national political affairs.” They can also manually tag posts for further review.

In Hangzhou, officials appear to have used Urun software to scan the Chinese internet for keywords like “virus” and “pneumonia” in conjunction with place names, according to company data.

A Great Sea of Placidity

A memorial to Dr. Li Wenliang carved into the snow of a Beijing riverbank in February. Dr. Li, whose warnings about the severity of the coronavirus were silenced, died from Covid-19. Credit: CHINATOPIX, via Associated Press

By the end of February, the emotional wallop of Dr. Li’s death seemed to be fading. C.A.C. workers around Hangzhou continued to scan the internet for anything that might perturb the great sea of placidity.

One city district noted that web users were worried about how their neighborhoods were handling the trash left by people who were returning from out of town and potentially carrying the virus. Another district observed concerns about whether schools were taking adequate safety measures as students returned.

On March 12, the agency’s Hangzhou office issued a memo to all branches about new national rules for internet platforms. Local offices should set up special teams for conducting daily inspections of local websites, the memo said. Those found to have violations should be “promptly supervised and rectified.”

The Hangzhou C.A.C. had already been keeping a quarterly scorecard for evaluating how well local platforms were managing their content. Each site started the quarter with 100 points. Points were deducted for failing to adequately police posts or comments. Points might also be added for standout performances.

In the first quarter of 2020, two local websites lost 10 points each for “publishing illegal information related to the epidemic,” that quarter’s score report said. A government portal received an extra two points for “participating actively in opinion guidance” during the outbreak.

Over time, the C.A.C. offices’ reports returned to monitoring topics unrelated to the virus: noisy construction projects keeping people awake at night, heavy rains causing flooding in a train station.

Then, in late May, the offices received startling news: Confidential public-opinion analysis reports had somehow been published online. The agency ordered offices to purge internal reports — particularly, it said, those analyzing sentiment surrounding the epidemic.

The offices wrote back in their usual dry bureaucratese, vowing to “prevent such data from leaking out on the internet and causing a serious adverse impact to society.”

All Rights Reserved for Raymond Zhong, Paul Mozur, Jeff Kao and Aaron Krolik