Because benevolent bots are suckers. Plus, racism in medical journals, the sperm-count “crisis” and more in the Friday edition of the Science Times newsletter.

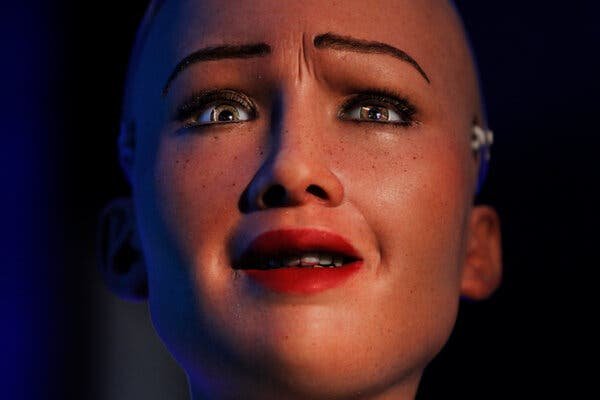

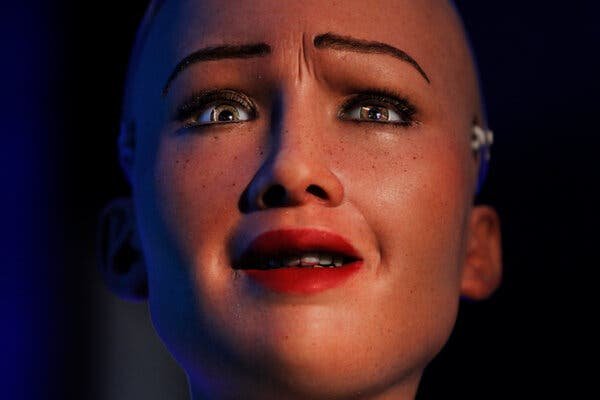

Artificial intelligence is gradually catching up to ours. A.I. algorithms can now consistently beat us at chess, poker and multiplayer video games, generate images of human faces indistinguishable from real ones, write news articles (not this one!) and even love stories, and drive cars better than most teenagers do.

But A.I. isn’t perfect, yet, if Woebot is any indicator. Woebot, as Karen Brown wrote this week in Science Times, is an A.I.-powered smartphone app that aims to provide low-cost counseling, using dialogue to guide users through the basic techniques of cognitive-behavioral therapy. But many psychologists doubt whether an A.I. algorithm can ever express the kind of empathy required to make interpersonal therapy work.

“These apps really shortchange the essential ingredient that — mounds of evidence show — is what helps in therapy, which is the therapeutic relationship,” Linda Michaels, a Chicago-based therapist who is co-chair of the Psychotherapy Action Network, a professional group, told The Times.

Empathy, of course, is a two-way street, and we humans don’t exhibit a whole lot more of it for bots than bots do for us. Numerous studies have found that when people are placed in a situation where they can cooperate with a benevolent A.I., they are less likely to do so than if the bot were an actual person.

“There seems to be something missing regarding reciprocity,” Ophelia Deroy, a philosopher at Ludwig Maximilian University, in Munich, told me. “We basically would treat a perfect stranger better than A.I.”

In a recent study, Dr. Deroy and her neuroscientist colleagues set out to understand why that is. The researchers paired human subjects with unseen partners, sometimes human and sometimes A.I.; each pair then played one in an array of classic economic games — Trust, Prisoner’s Dilemma, Chicken and Stag Hunt, as well as one they created called Reciprocity — designed to gauge and reward cooperativeness.

Our lack of reciprocity toward A.I. is commonly assumed to reflect a lack of trust. It’s hyper-rational and unfeeling, after all, surely just out for itself, unlikely to cooperate, so why should we? Dr. Deroy and her colleagues reached a different and perhaps less comforting conclusion. Their study found that people were less likely to cooperate with a bot even when the bot was keen to cooperate. It’s not that we don’t trust the bot, it’s that we do: The bot is guaranteed benevolent, a capital-S sucker, so we exploit it.

That conclusion was borne out by reports afterward from the study’s participants. “Not only did they tend to not reciprocate the cooperative intentions of the artificial agents,” Dr. Deroy said, “but when they basically betrayed the trust of the bot, they didn’t report guilt, whereas with humans they did.” She added, “You can just ignore the bot and there is no feeling that you have broken any mutual obligation.”

This could have real-world implications. When we think about A.I., we tend to think about the Alexas and Siris of our future world, with whom we might form some sort of faux-intimate relationship. But most of our interactions will be one-time, often wordless encounters. Imagine driving on the highway, and a car wants to merge in front of you. If you notice that the car is driverless, you’ll be far less likely to let it in. And if the A.I. doesn’t account for your bad behavior, an accident could ensue.

“What sustains cooperation in society at any scale is the establishment of certain norms,” Dr. Deroy said. “The social function of guilt is exactly to make people follow social norms that lead them to make compromises, to cooperate with others. And we have not evolved to have social or moral norms for non-sentient creatures and bots.”

That, of course, is half the premise of “Westworld.” (To my surprise Dr. Deroy had not heard of the HBO series.) But a landscape free of guilt could have consequences, she noted: “We are creatures of habit. So what guarantees that the behavior that gets repeated, and where you show less politeness, less moral obligation, less cooperativeness, will not color and contaminate the rest of your behavior when you interact with another human?”

There are similar consequences for A.I., too. “If people treat them badly, they’re programmed to learn from what they experience,” she said. “An A.I. that was put on the road and programmed to be benevolent should start to be not that kind to humans, because otherwise it will be stuck in traffic forever.” (That’s the other half of the premise of “Westworld,” basically.)

There we have it: The true Turing test is road rage. When a self-driving car starts honking wildly from behind because you cut it off, you’ll know that humanity has reached the pinnacle of achievement. By then, hopefully, A.I. therapy will be sophisticated enough to help driverless cars solve their anger-management issues.

All Rights Reserved for Alan Burdick