A British court ruled that teenager Molly Russell died in part because of online content—but holding platforms accountable is complicated.

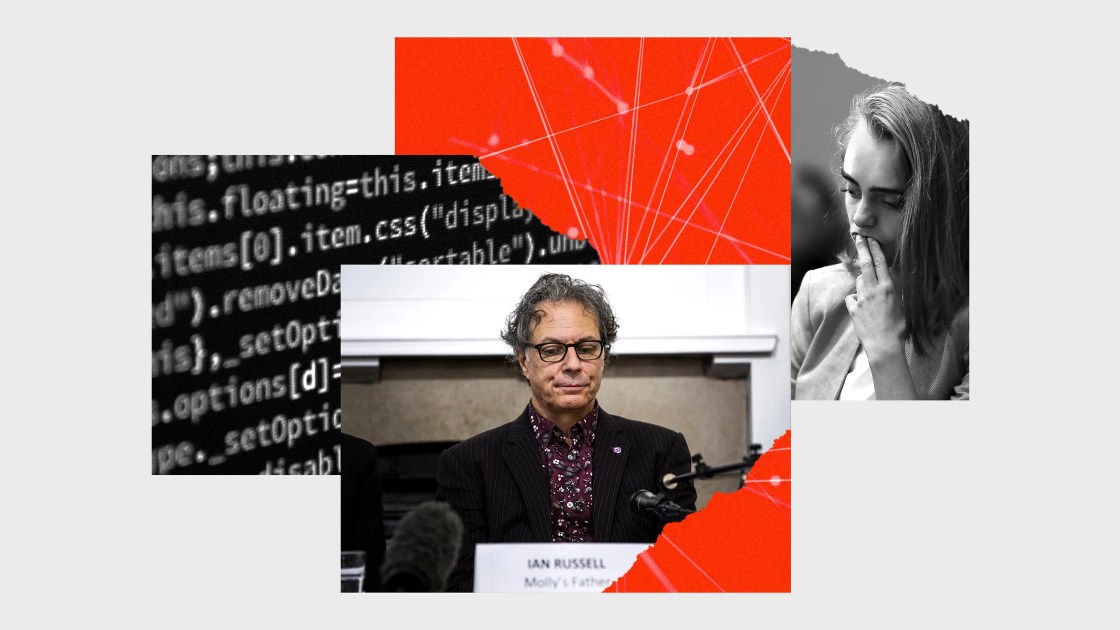

When 14-year-old Molly Russell died in 2017, her cell phone contained graphic images of self-harm, an email roundup of “depression pins you might like,” and advice on concealing mental illness from loved ones. Investigators initially ruled the British teen’s death a suicide. But almost five years later, a British coroner’s court has reversed the findings. Now, it has concluded that Russell died “from an act of self-harm while suffering from depression and the negative effects of online content,” and the algorithms themselves are on notice.

This isn’t the first time that technology and suicide have collided in high-profile cases that push the boundaries of both science and law. And it certainly won’t be the last. A growing body of research suggests that social media platforms play a role in depression, body image issues, and other mental health challenges among users. While most cases to date have focused on the individuals who use platforms to cyberbully others, the inquiry into Russell’s death marked “perhaps the first time anywhere that internet companies have been legally blamed for a suicide,” according to The New York Times.

Yet the British ruling does not necessarily mean that social media companies are being held accountable. For one, a coroner’s court cannot exact punishment; Meta and Pinterest executives were compelled to testify, but no one is paying up, let alone going to prison. More importantly, the available research linking mental health problems and social media platforms mostly hinges on associations—X and Y both changed, but whether one caused the other is hard to say. Attributing definitive responsibility for social media-linked suicides remains meaningfully out of reach.

After all, Molly Russell isn’t the only person whose cause-of-death determination could use some court-mandated nuance. Do 6.5 million people die each year of air pollution, or of fossil capital? Are heart attacks the leading cause of death in the United States, or is the cycle of poverty? For that matter, what suicide isn’t an act of self-harm punctuating a long series of related struggles?

How we answer these questions matters. But in the US, the future of such cases rests on the willingness of judges and juries to wrestle with ever-longer chains of causation. It will also force an uncomfortable choice for legislators and their constituents: Take a bold leap past the existing science of suicide, or impatiently wait for new results.

In case it needs to be said: Encouraging suicide sucks, whether it comes from a school bully, an anonymous account, or your newsfeed. But that doesn’t make it illegal.

In 2006, a Missouri mom named Lori Drew and her employee created a fake MySpace account to pose as a teenage boy she named Josh Evans. Drew used the account to talk to her 13-year-old neighbor, Megan Meier. Drew believed that Meier had spread rumors about Drew’s own teenage daughter, Sarah. The messages began flirtatiously, but eventually “Josh Evans” allegedly told Meier “the world would be a better place without you.” Soon after, Meier was dead.

Even after word got out about the fake MySpace account, local police declined to arrest Drew. The Meiers never brought a civil case against her. And when the US attorney’s office in Los Angeles pursued Drew on federal charges under the Computer Fraud and Abuse Act (CFAA), a 1986 cybersecurity law, the case faltered. Drew eventually walked away a free woman.

The next time these questions emerged in the US, things went a little differently. In 2014, Conrad Roy, an 18-year-old in Massachusetts, died by suicide. His phone revealed years of conversations with a long-distance girlfriend, Michelle Carter, who repeatedly pushed him, via text, to kill himself. Carter, who was 17 at the time of Roy’s death, was subsequently convicted of involuntary manslaughter in a juvenile court and served 11 months in jail.

Based on media reports of Carter’s actions, the desire to punish her made sense, says Mark Tunick, a political theorist at Florida Atlantic University. But in Texting, Suicide, and the Law, Tunick argues that the two categories of manslaughter in Massachusetts—by omission (or failure to intervene) and commission (harm caused by recklessness)—did not apply to Carter. For one, the teen did not have a responsibility to protect Roy in the way a parent or doctor would. More importantly, the court could not prove that Carter caused Roy’s death.

Many legal theorists more or less agreed. In US courts, the question of cause is often determined by the “but-for” test: But for Professor Plum bashing Mrs. Peacock over the head with the candlestick in the conservatory, Mrs. Peacock would still be alive. Because suicide is traditionally seen as the voluntary act of an individual, courts have typically considered any other chain of causation broken in those decisive final moments. Carter could have sent all the texts she wanted, but in this line of thinking, the real “but for” was Roy’s own actions.

The power of social contagion further complicates notions of personal responsibility for suicide. It may be the action of an individual, but suicide is “a social disorder for the most part,” says David Fink, a social epidemiologist at the New York State Psychiatric Institute. Methods and rationales vary widely across time and culture. Economic factors seem to have a profound effect on suicide rates. Now, doctors and scientists are grappling with social media’s role in disseminating harmful ideas.

Since at least the 1970s, epidemiologists have shown that exposure to suicide—whether the death of a friend or family member, or through mass media—raises a person’s risk of suicidal thoughts or actions. Against this backdrop, blaming the individual feels both counterproductive and incomplete.

Yet researchers have struggled to identify a clear mechanism by which these ideas might spread. Part of the problem comes down to the methods available to researchers, says Seth Abrutyn, a sociologist at the University of British Columbia.

On one end of the spectrum are suicides that cluster between close ties, like those formed among incarcerated people, high school students, and Native American youth. To understand if and how one suicide in the community triggered others, researchers like Abrutyn conduct in-depth interviews with those who are still alive. They’ve found that the spread of suicidal thoughts and behaviors is less about “contagion” than education: Like learning to play chess or taking up smoking, people who die by suicide appear to teach those around them a new way to think about their distress, the means for suicide, and more.

On the other end of the spectrum are suicides that spread out with less force but over a wider network, as in the aftermath of a celebrity’s suicide. Such cases are studied using statistical methods that look for fluctuations in the number of suicides one would expect based on data in a given year. In the months after the actor Robin Williams’ high-profile suicide, for example, researchers found 10 percent more suicides than expected—likely the result of extensive media coverage.

But both of these methods come up short at answering some of the most fundamental questions about suicide. Interviews are limited both by the accuracy of self-reports and the fact that many people in a suicide cluster are no longer alive to share their stories. While statistical methods can prove that narratives about suicide matter, they don’t offer much insight into how these messages can be changed for the better.

In recent years, it’s become clear that social media sits between these two established extremes, and the data needed to fill in this gray area belong to the very companies who hope to obscure their influence on users. While TikTok, Instagram, Facebook, Twitter, and other platforms are explicit in their aim of fostering close ties over vast distances, they are averse to any independent analysis of the consequences. That makes studying suicide contagion over digital networks effectively impossible.

Suicidologists also know that attempts to attribute blame for suicide can backfire in spectacular ways. Abrutyn’s research suggests that the way we talk about suicide is a vector unto itself. In a 2019 study of one community with a spate of youth suicides, Abrutyn and his colleagues showed that rationalizing these deaths as the desire of students to “escape” issues like “school stress” seemed to teach other students with similar challenges that suicide was an option for them, too.

It’s easy to extend this logic to Russell’s case: If everyone agrees depressing social media posts can drive a person to suicide, it stands to reason more people will die with this rationale in mind. But by the same token, Abrutyn says the right narrative can also reduce the risk of suicidal thoughts or behaviors. Finding ways to talk about individual and community resilience, and the availability of resources for people who are grappling with suicidal ideation, can in fact be protective.

We may never be able to say to everyone’s satisfaction that social media caused Molly Russell’s death, or anyone else’s. But that doesn’t mean we can’t work to prevent future harm.

In the US, speech encouraging suicide could be explicitly outlawed through new legislation. While the scholarship on this topic is still in its infancy, the lawyer Nicholas LaPalme has proposed a new framework called “overwhelming the will.” This standard would recognize “how potent” someone like Carter’s “words are when his or her victim is already battling with depression.” If courts recognized that someone could overwhelm the will of another, the chain of causation could remain intact “because the victim did not have the mental capacity at the time to choose” to resist the other person’s words.

Setting aside the contentious issue of “mental capacity” and autonomy, the framework of “overwhelming the will” could easily extend to cases like Russell’s. A decade ago, it was easy enough to brush off a site like Facebook as a neutral tool that individual users wielded for good or for ill. Today, however, one could certainly argue that algorithmic platforms “overwhelm the will” of users by identifying their weaknesses and targeting them with content that makes them engage more and feel worse.

The question is, do Americans want to live with the repercussions of laws like these?

If this type of content were illegal, platforms would need to devise ways to prove their algorithms were not pushing users deeper into depression and suicidality. But it’s important to acknowledge that these companies would likely use more algorithms to scrub their platforms of any potentially criminal content. “Algorithms do not do gray areas very well,” says Tony D. Sampson, a digital cultures researcher at the University of East London. So whatever filter resulted would be incredibly coarse; if everything suicide-adjacent were banned, an article like this one might not make it through. Without more insight into the mechanism of suicide contagion, there’s no real way to make these instruments more precise, either.

Given these constraints, legislation might do better to focus on compelling social media companies to reengineer their recommendation systems and incrementally remove harmful content from search—instead of criminalizing the purported consequences of these posts. Crucially, governments must also compel social media companies to turn data over to independent researchers, who can use their findings to guide more precise bans on content and perhaps even identify positive ways that these platforms can support users struggling with suicidal thoughts or behaviors.

Politicizing deaths like Meier’s, Roy’s, or Russell’s can have immense value—but only insofar as they help people in similar circumstances. For now, it might be more helpful to gather the evidence we need for an informed conversation.

All Rights Reserved for Eleanor Cummins